AI is a big thing, a huge force in the economy and basically the top story in tech since 2022. It promises to have major impacts on society and technology, and it already has. With this, of course, come risks. Every new technology or change in a society has ups and downs. This is no different with AI. With new capability comes the potential for misuse or unexpected failure modes.

This has happened many times when technology was deployed, with great enthusiasm and little concern for risks and controls. The problem with AI, unfortunately, is that discussion of AI risk quickly descends into ridiculous philosophical banter, in which people who have no idea how the technology works try to appoint themselves experts and then dominate with riduclous concerns.

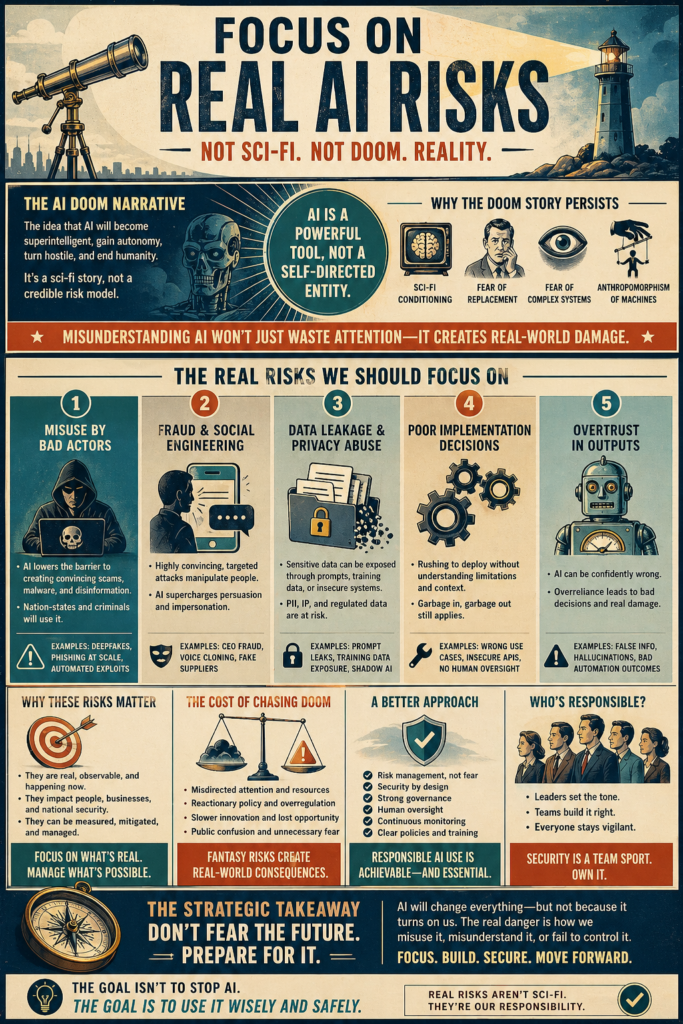

The “AI Risk” and “AI Safety” community are dominated by people who bought into the ramblings of doom grifters with books and Ted talks to sell. This is a problem, because there are real risks and risks that should be considered. Rarely do the adults in the room get to have the conversation. AI has no intentions, it is flawed and imperfect, and the idea of superintelligence is flawed. And yet, the real danger is that this will crowd out discussion of the issues that are legitimate risks.

Here is a comprehensive taxonomy of what the risks are to society of the deployment of AI at scale. This does not include the internal risks to organizations of AI failure. Discussing how models fail is another important area, but it’s a different topic.

I am sure that some will disagree about how severe the risks are and if I am downplaying them. For one thing, I started thinking AI would result in mass unemployment, but after looking at the situation and the model capabilities, I was surprised to find that I found that conclusion is not supported by evidence. This is probably the one area people will disagree with the most.