AI is a big thing, a huge force in the economy and basically the top story in tech since 2022. It promises to have major impacts on society and technology, and it already has. With this, of course, come risks. Every new technology or change in a society has ups and downs. This is no different with AI. With new capability comes the potential for misuse or unexpected failure modes.

This has happened many times when technology was deployed with great enthusiasm and little concern for risks and controls. The problem with AI, unfortunately, is that discussion of AI risk quickly descends into ridiculous philosophical banter, in which people who have no idea how the technology works appoint themselves experts and then dominate the conversation with ridiculous concerns.

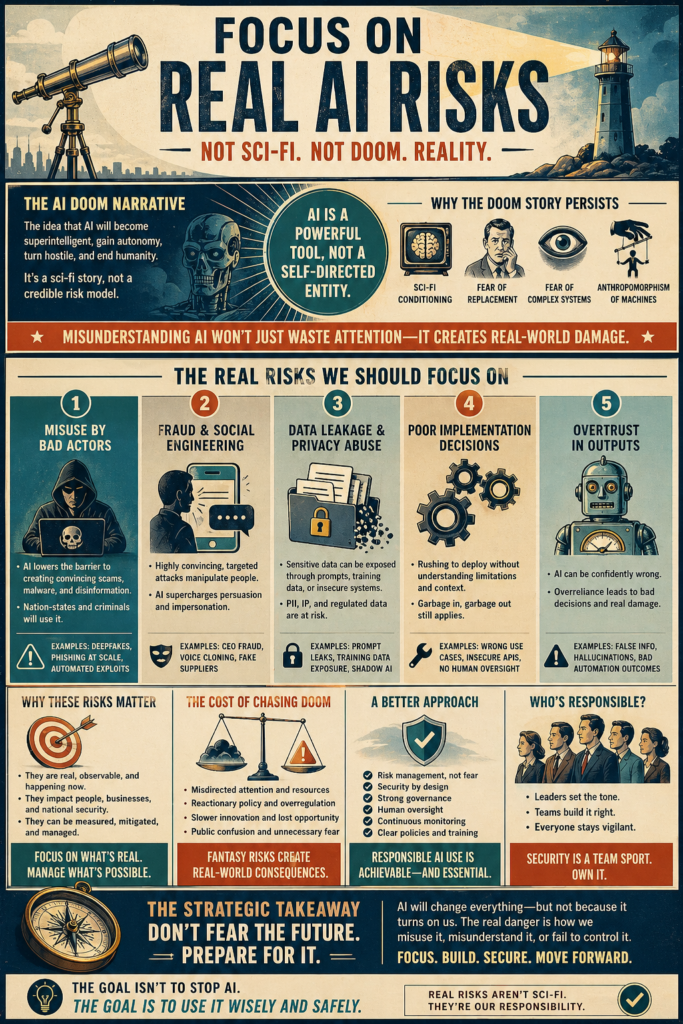

The “AI Risk” and “AI Safety” communities are dominated by people who bought into the ramblings of doom grifters with books and TED talks to sell. This is a problem, because there are real risks that should be considered. Rarely do the adults in the room get to have the conversation. AI has no intentions, it is flawed and imperfect, and the idea of superintelligence is flawed. The real danger is that the doom talk will crowd out discussion of the issues that are legitimate risks.

Here is a comprehensive taxonomy of what the risks are to society of the deployment of AI at scale. This does not include the internal risks to organizations of AI failure. Discussing how models fail is another important area, but it’s a different topic.

I am sure that some will disagree about how severe the risks are and whether I am downplaying them. For one thing, I started out thinking AI would result in mass unemployment, but after looking at the situation and the model capabilities, I was surprised to find that the conclusion is not supported by evidence. This is probably the one area people will disagree with the most.

General Collective Risks of AI:

Mass Unemployment:

One of the most common concerns expressed about generative AI is that it could cause mass unemployment by displacing the need for human labor. This seems very intuitive. Once it is clear that generative AI can accomplish a large number of tasks that previously would have required human judgement, it seems obvious that it is likely to cause a mass loss of employability. Importantly, there have been plenty of times when employment has slowed down due to economic conditions, but the fear here is that this will be a durable, long-term lack of solid employment opportunities for broad portions of the population, perhaps leading to a new employment landscape.

That has not yet happened. Granted, there have been layoffs, and some have been blamed on AI. However, this has often been shown to be a case of “AI washing,” where companies claim AI is the reason for layoffs that are actually being done for other reasons. Even counting the layoffs said to be because of AI, only a relatively small number of positions have been lost. That’s not surprising, because technology that improves efficiency rarely results in the termination of established staff, at least historically.

It’s natural to expect that, like any technology, there will be winners and losers and some sectors will be disrupted. Previous technology shifts happened over years and didn’t really result in any massive job losses that were greater than the expected economic churn. In this area, however, there is a strong sense that this technology will not follow that pattern. In fact, many voices and leaders, including those in the AI sector, have confidently predicted mass job loss.

There’s every reason to be skeptical of this. Jobs are much harder to replace in practice than they seem. Few have managed to find ways to directly replace jobs with AI automation. Still, the impression that massive and irreversible job losses may happen remains common. Since it is not unlikely that there will be some level of disruption, it is a possibility that should be considered.

Economic Disruption:

Beyond just disrupting employment directly, there is the potential that AI adoption, and the disruption that comes along with it, could cause greater problems to the economy and markets in general. This can also result in net job losses, but the losses are more indirect than when AI directly replaces labor in the workplace. For example, there has been speculation about AI replacing a large portion of software as a service, disrupting the sector. Already there are distinct examples of AI causing mass losses to competing companies. For example, the homework help company Chegg lost a huge amount of value due to AI competition.

There has been growing concern over an AI “bubble” caused by overinvestment in the sector and too many players. There’s some grounding to this. For example, there are well over one hundred companies making general purpose legal AI tools, and the market is not big enough for that many participants. Many companies in the AI sector have garnered a great deal of venture capital but have failed to return a profit.

There are also companies that have invested poorly in AI and lost money because of it. Bubbles can have broad economic consequences when they burst. At present, much of the last two years’ economic growth has been due to investment in the AI sector, so it’s not unreasonable to be concerned about this.

Disempowerment of Content Creators:

Generative AI trains on the open web, and generally most of the things that the developers can get their hands on, including books, articles, reports and original artwork. From a copyright standpoint, this has always been contentious. Not all content creators, artists and authors consent to this and many object. There have been some lawsuits, but the issue remains unresolved. It could be argued that the use is transformative and covered by fair use.

There is the question of whether it is fair not to compensate original content producers, but the problem goes further than that. If one’s visual style can be recreated, or even if it just no longer seems necessary to hire an original artist, composer or writer, this could devalue the work and the profession in general. The dystopian future vision warned of is one in which authors, musicians and writers are unable to make money on their own content, but big AI firms are getting rich off of it.

There have been some lawsuits filed about this, and some have won settlements. However, case law and precedents as to how to best deal with this issue remain sparse. The problem is that the development of generative AI does necessitate training material, and it’s not clear if that is truly fair use.

It’s unlikely that human talent will ever be completely devalued, but even a partial loss of value in creativity would be a huge loss for society. In that case, it would not only be the creators themselves who lose out. The general loss of human originality and expression would be tragic.

Concentration of Power:

At present, frontier models are primarily produced by the “Big Three” AI labs: Google, Anthropic and OpenAI. There are other big players in the AI world, such as Microsoft, Nvidia and a handful of others. However, it’s a relatively closed ecosystem and the companies who control the models are already few. It’s not easy for just anyone to build AI models either. Although the barrier is constantly getting lower, the need for manpower, compute and data does give a distinct advantage to the big established players.

These companies are already extremely wealthy, as are their CEOs and executives. This alone gives some great pause when considering how dependent the world seems to be getting on the products produced by these few companies. There’s also the fact that they are very secretive about what types of safety and quality control measures they are taking. It sometimes seems like the world is being asked to trust these few labs to continue to produce the models and sell them at a reasonable price.

There is not only the issue of monopolistic concentration of wealth, but also the potential political power that comes from economic importance. There are concerns that regulatory capture may be part of a strategy to make it harder for new players to compete with the existing frontier labs and large tech companies.

A strong counterpoint to this is not only the reduced cost of developing AI models, but the increasing capabilities of open-source models. Large, highly capable open-source models like Qwen, DeepSeek and Llama can’t always match the capabilities of models like GPT or Claude, but they are closing the gap quickly and can be customized and self-hosted. The cost of doing so is likely to fall as GPU capacity improves. It is possible that the AI revolution will still end up concentrating power in a few organizations, but the fact that the field is so open does put limits on this.

Likewise, there is the impression that AI dominance could have major impacts on the geopolitical balance of power. An AI “arms race” between the US and China comes up frequently. There is also the possibility that poorer areas of the world would have even greater trouble competing in an AI-centered world.

Biased Decision Making:

The reinforcement of biases by AI is something which has been raised on many occasions and is an area of research and interest. The fact that this area has received so much concern does at least offer hope that it is being addressed. AI learns from patterns, and the problem with this is that many of the patterns have built in biases that it can reinforce. For example, a generative model would have trained on all of society’s material, even though some of it includes latent sexism or racism.

The problem is most often discussed in terms of generative AI systems. For example, an image generator that always draws executives as white males, or a language model that creates undertones of sexism in how it presents characters in stories. Those problems are hard to account for, because, in some cases, it’s hard to agree as to what a truly neutral output is.

However, it is really not generative AI where the problem has the most acute consequences. Automated decision making has existed for decades for things like loans, employment, insurance, permits and so on. These decisions are important and can really impact people’s lives. They can reinforce established discrimination and entrench bad policy.

The problem is that, unlike explicitly written rules, it can be hard to know what an ML algorithm is doing when making a decision. Conceivably, as an example, it might find a pattern of home loans regularly being denied to families with certain features associated with a given race, like Hispanic last names or living in areas with heavy African American populations, and then reproduce that pattern in its own decisions.

There are audits, isolation procedures and other ways to try to address this. It is one of the top concerns of regulators, and some jurisdictions do have laws explicitly against it, requiring opt-outs or explanations of decisions. Still, this has already been a problem. The company Workday has been sued over an algorithm alleged to discriminate against those with physical disabilities and workers over 40.
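One simple form such an audit can take is a disparate impact check: compare approval rates across groups and flag any group whose rate falls well below that of the best-treated group. The sketch below is only an illustration. The function names and group labels are made up, and the 0.8 threshold is the "four-fifths" rule of thumb from US employment guidance, used here as an assumed default rather than a legal test.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def flag_disparate_impact(decisions, threshold=0.8):
    """Return groups whose approval rate, relative to the best-treated
    group, falls below the threshold (assumed four-fifths rule of thumb)."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    if best == 0:
        return {}  # nobody was approved, so there is no ratio to compare
    return {
        group: rate / best
        for group, rate in rates.items()
        if rate / best < threshold
    }


if __name__ == "__main__":
    # Hypothetical audit sample: (group label, was the application approved?)
    sample = (
        [("group_a", True)] * 8 + [("group_a", False)] * 2
        + [("group_b", True)] * 5 + [("group_b", False)] * 5
    )
    print(flag_disparate_impact(sample))
```

A check like this only looks at outcomes. It says nothing about why the model treats the groups differently, which is why it is usually a starting point for an audit rather than the whole of it.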

Environmental Harm:

There has been much concern raised about the environmental footprint of AI, especially in terms of the resources consumed by data centers. AI services are almost always cloud services, and they require large compute capacity, with specialized hardware, such as large GPU clusters. This requires three things: land, power and cooling.

Data centers in the AI age are not simply large commercial facilities, but are extremely vast. Some cover many acres of space. They are relatively closed to the local community, as they employ a relatively small staff, given their size. They do have some advantages to local communities: they provide employment, though staffing is modest, and they do pay property taxes, which can make them attractive to some communities.

Data centers are also enormous consumers of electricity. Some consume well over a gigawatt. The largest consume more than two gigawatts. That’s more than a small city and more than all but the largest power plants put out. This is the primary reason for environmental concern. Data centers need near constant dispatchable power, so they don’t necessarily pair well with renewables.
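To put those figures in perspective, a quick back-of-the-envelope calculation helps. The sketch below converts a constant power draw into annual energy and into a rough household equivalent. The household figure of 10,500 kWh per year is an assumed round number in the range often quoted for US homes, not something measured here, and real facilities do not run at a perfectly constant load.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(power_gw: float) -> float:
    """Energy used in one year by a constant load, in terawatt-hours."""
    return power_gw * HOURS_PER_YEAR / 1000  # GWh -> TWh


def equivalent_households(power_gw: float, kwh_per_household: float = 10_500) -> float:
    """Rough number of households using the same energy per year.

    kwh_per_household is an assumed average, not a measured value.
    """
    kwh_per_year = power_gw * 1_000_000 * HOURS_PER_YEAR  # 1 GW = 1,000,000 kW
    return kwh_per_year / kwh_per_household


if __name__ == "__main__":
    for gw in (1.0, 2.0):
        print(
            f"{gw:.0f} GW constant load: "
            f"{annual_energy_twh(gw):.2f} TWh/year, "
            f"about {equivalent_households(gw):,.0f} households"
        )
```

A constant one gigawatt load works out to 8.76 TWh per year. Under the assumed household figure, that is the consumption of several hundred thousand homes.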

Normally, data centers simply draw from the local grid, but with such enormous buildout, this has proven controversial. In some areas, this has increased the use of fossil fuels or prevented power plants from being retired. Some data centers are looking to nuclear energy, and this has resulted in the surprising plan to reopen Three Mile Island, but there is limited nuclear capacity. Some data centers have resorted to constructing their own on-site power generation. One thing is clear: the increased use of AI is resulting in significant energy draw.

Then there are concerns about water usage. Data centers must be cooled, and this is where water use becomes an issue. Although it is possible to fully air cool a data center, it is more efficient to use water, either in the form of a draft system that sprays water in cooling towers or in a water loop cooling system. The latter has the advantage of not really expending water, but instead taking water in and returning it to the environment slightly warmer. However, evaporative systems are lossy.

Economic Distortion of Resources:

Along with the concerns about electricity usage and the environment is the fact that increased demand for electricity also has the tendency to drive up prices, and, in extreme cases, even create shortages. With a power grid that can only grow so quickly and the demand for so much new compute capacity, there are real concerns that data center growth could cause local or regional electricity supply or cost problems. There is even the greater fear that this could spill over to more expensive natural gas.

There is also the potential for shortages and price increases in computing services, due to capacity being used for AI training or deployment. Already this has had impacts on the cost of computing hardware. GPUs and RAM have both gone up in price dramatically. Memory in general is in short supply, because AI companies and data centers have bought up stocks, in many cases even before they have been produced.

While this has been a problem, it is worth noting that the shortage of computing hardware is a short-term problem, and the market will eventually catch up with it. Electricity demand, and beyond that, demand for land, water and bandwidth may be longer term concerns and more fundamental to the buildout of AI compute capacity.

Risks of Poor Quality Content:

The AI Slop Effect:

The AI slop effect is absolutely real and has already happened. It’s simply the fact that generative AI can create an enormous volume of material, and that material is easy to publish. It’s not hard to publish faceless YouTube channels, automated blogs and post to social media with the use of AI. Not only can the posts and content be created by AI, but the entire pipeline can be automated.

The result is something we all know by now. The internet is being flooded with AI generated content. In some cases, even brands and people who could do it themselves turn to AI, perhaps out of a perceived lower risk or easier workflow. The result is a huge reduction in quality of content. AI content is overly smooth, it lacks uniqueness and the perplexity of human life.

The content is often easy to see. It may contain ridiculous hallucinations or just poor quality reasoning and presentation. What is most annoying is that AI generated content does not need to be slop. With careful human oversight, editing and curation, it’s possible to get great content from generative AI, if some minimal effort is put into it. Unfortunately, rarely is any.

The risk here is just that the internet and media in general will get worse, less accurate, more sloppy and less engaging. The worst possibility is if we learn to accept it and it becomes the “new normal.”

Vibe Coding Errors:

Vibe coding, that is, coding entirely by prompts, with minimal or no inspection of the code itself, is now a common practice, and some organizations have even boasted that more than 75% of their code is no longer human-generated. This introduces the obvious risk of an error or bug entering production without being caught. The standards for code review and approval are already terribly enforced and bugs and zero days are common, so it’s concerning that the use of AI for coding could increase this problem.

The biggest problem here is that the fact that the code runs properly, when tested, does not mean it is error free. It may still contain critical security flaws, and some AI generated software has already had such errors show up. It’s not surprising, because the AI models train on huge quantities of code, including code that might not be written to the highest standards of security. It can also make logical errors or hallucinations.
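A small example makes the point concrete. The sketch below is a made-up illustration, not code from any real incident: the table, the data and the function names are hypothetical. Both lookup functions pass the obvious happy-path test, but the first builds its SQL by string formatting and is open to injection, while the second uses a parameterized query.

```python
import sqlite3


def make_db():
    """Create an in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def lookup_unsafe(conn, name):
    """Works for normal input, but the name is pasted straight into the SQL."""
    query = f"SELECT secret FROM users WHERE name = '{name}'"
    return [row[0] for row in conn.execute(query)]


def lookup_safe(conn, name):
    """Same lookup with a parameterized query, so input is treated as data."""
    query = "SELECT secret FROM users WHERE name = ?"
    return [row[0] for row in conn.execute(query, (name,))]


if __name__ == "__main__":
    conn = make_db()
    payload = "x' OR '1'='1"
    print(lookup_unsafe(conn, "alice"))  # the happy path looks fine
    print(lookup_unsafe(conn, payload))  # leaks every user's secret
    print(lookup_safe(conn, payload))    # matches no user
```

A test that only checks the lookup for "alice" would pass for both versions, which is exactly how this kind of flaw slips through when nobody reads the code.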

The most unsettling thing about vibe coding is that the code may be written by AI operated by individuals who do not know the programming language themselves, or who, even if they do, may not spend much time checking it for errors and proper standards.

It is possible that this effect will be mitigated by better coding tools which enforce multiple rounds of inspection, automated stress testing and sandbox testing. That’s already happening to some extent, with newer coding tools and environments. Still, errors continue to show up, and it’s unclear how much of a priority this issue is.

Hallucinations and Misinformation in the Wild:

Hallucinations remain a major and poorly appreciated problem in generative AI. Despite claims otherwise, they really cannot be trained out of models. Hallucinating factual information, at least on occasion, is native to how things like large language models work. The only real way to reduce hallucinations is with output gating and verification, which is rarely implemented.
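As a rough illustration of what output gating can look like, the sketch below holds back a draft if it cites any source that cannot be found in a trusted index. Everything here is an assumption made for the example: the `[cite: ...]` marker convention, the function name and the small set standing in for a real database of verified sources.

```python
import re

# Assumed convention: the model is told to mark every source as [cite: Title].
CITE_PATTERN = re.compile(r"\[cite:\s*([^\]]+)\]")


def gate_output(text, trusted_sources):
    """Return (ok, unverified): ok is False if any cited source is not
    found in the trusted index, and unverified lists the offending ones."""
    cited = [match.strip() for match in CITE_PATTERN.findall(text)]
    unverified = [source for source in cited if source not in trusted_sources]
    return (len(unverified) == 0, unverified)


if __name__ == "__main__":
    # Stand-in for a lookup against a real, verified database.
    trusted = {"Marbury v. Madison", "Brown v. Board of Education"}
    draft = (
        "The principle was set out in [cite: Marbury v. Madison] and "
        "later extended in [cite: Smith v. Imaginary Corp]."
    )
    ok, unverified = gate_output(draft, trusted)
    if not ok:
        print("Held for human review. Unverified citations:", unverified)
```

This only catches fabricated sources that are explicitly marked. A false claim with no citation at all would pass straight through, so a gate like this complements human review rather than replacing it.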

Hallucinations have shown up in the case law cited in court filings, in government reports, in scientific studies and elsewhere. When they are caught, they can cause major reputational and financial problems. Law firms have been fined by judges, and Deloitte Australia had to refund the government on a major report. Unfortunately, many do not understand the risk of hallucinations, which are inherently difficult to spot, because they tend to be plausible and fit the context.

While it is problematic when these false statements are caught, it is potentially a whole other problem when they are not caught. Librarians have reported that a large number of requests for references are for nonexistent works. This may only be the tip of the iceberg. The potential that there could be a normalization of contamination of the public record, the news cycle, scientific journalism or legal decisions is something that should not be discounted. Once false facts end up as matters of authoritative record or the justification for decisions, it can be hard to reverse the problem.

Unsupervised Automation:

This is a broad category, but there are many risks and reductions in quality and capability that can come from prematurely leaning on automation without supervision or human fallback options. We have all experienced something like this when trying to get customer service, only to be kicked back constantly to an automated system that does not work properly. It’s extremely frustrating. The economics are straightforward: when automation of a process is possible, there is a strong incentive to fully automate, and human oversight is often an afterthought.

Much can go wrong when this becomes a trend. Emergencies can be impossible to respond to, and edge cases get stuck in the system. Unattended systems may be subject to attack by malicious actors, both in the cyber realm and in the physical world. Understaffing and moving to full automation can cause this and other problems.

Risks of Misuse or Malicious Use:

Deep Fakes and Misinformation:

Fake news and misinformation have always been a problem. It has gotten worse in the era of social media and the internet. It’s not uncommon for false or misleading stories to show up in feeds. When the story has fake video or images, that can make it extremely convincing. Mistitled videos and edited photos have been a problem for a long time, but AI makes it a much more serious problem because it can easily automate large volumes of content creation, while maintaining high quality and consistency. Most disturbing, however, are deepfakes.

A few years ago, having a video of an event was solid proof it had happened. After all, editing a person into saying or doing something they didn’t really say or do would require a lot of effort by an effects expert. AI has made it very easy to fake a video or image of anyone doing almost anything. This means one can easily create a video of a politician saying something they never said or a news event that never happened. It’s easy and the results can look very convincing.

There is a deeper problem in the potential for a loss of trust in what one is seeing. Beyond news media and public misinformation, there is the potential that court cases, official matters of record or other important fact finding could be confused by extremely convincing deep fakes. Right now, deep fakes can often be detected, but in the future, it may be extremely difficult to tell if a video is real or completely fabricated. This could be a huge problem if the video shows someone committing a crime they deny committing.

This same concern was raised in the past, due to doctored photos in the early 20th century and Photoshop in the late 20th century. Hopefully, as in years past, society’s expectations of media will recalibrate. Some have proposed draconian punishments for deep fakes. That’s not surprising, as that is often the first lever anyone goes for when a new capability causes concern. Unfortunately, just trying to criminalize the matter is unlikely to be effective.

Fraud and Deception:

There are many ways that one can commit fraud against others, and it is often a lucrative crime, which is lower risk than physical theft. Classic examples of how a person can dishonestly make money include invoice fraud, creating fake stories of urgency, confidence games and catfishing schemes. There are many ways, but the limiting factor tends to be human labor. It takes effort to track down someone’s information and concoct a scam. It is also difficult for those who may not be familiar with the culture or language.

Generative AI drops the barrier to entry significantly and makes the whole process easy to automate and scale. AI systems can go as far as fully automating phone fraud. It’s now easy, with AI tools, to find out the background information about someone and to easily create very realistic invoices, records and messages that look very real and plausible. Deepfakes make the process much worse. It’s easy to scam someone when you can create very believable media, even cloning the voice of their boss or spouse.

Already we have seen this happening. Senior citizens have been the victims of voice cloning scams. Automated scams that leverage deepfakes have been reported. Overall, fraud is up due to the fact that AI enables it. We may be headed to a “fraudpocalypse.”

The most realistic concern here is that it will cause major societal losses and economic pain because it will be tolerated for too long. There are things that can be done to detect and prevent fraud, but it’s likely that financial institutions, banks, insurers and governments will treat this as they do many problems: they will just absorb and redistribute the losses as interest, premiums or taxes. This may go on for many years more than it should. This happened with other losses like ransomware and credit card fraud.

Cyber Security:

Cybercrime has been a source of enormous loss, and the potential that a cyber attack could be a major disaster for society has become a real matter of concern. The high losses in cyber security have been happening for some time. They are driven primarily by the failure of cyber insurance to control losses and the actions of underwriters to attempt to profit off of the ransomware crisis. Still, the costs are high and rising. By some widely cited estimates, the world loses more than ten trillion dollars a year due to cyber security events.

AI does not really change the basic equation of cyber security nor does it invalidate existing cyber security measures, despite what may have been reported. If anything, it makes cyber security more important and more valuable. The one thing that AI does is it makes it easier and faster to find exploits and it allows for processes to be automated. So it makes the entire operation faster, smoother and easier.

Reports in the news media have been that Anthropic’s newest Claude Code version for cyber, dubbed Mythos, is too dangerous to release, because it is so effective at mounting cyber-attacks. That’s really more hype and marketing than it is substance, but it goes to show that these tools are effective and anyone who has their defenses down will likely be a victim. In fact, the tools already available are already effective.

It is likely that there will be even more losses due to cyber security attacks, but it’s not inevitable. AI only automates the process. The problem is that there were already weaknesses in the stack, and we have tolerated extreme sloppiness in cyber security for years. Those who clean up their act will be fine. Most won’t.

The most important thing to realize is that AI does not really make a new attack possible. It doesn’t mean that the sky is falling, but it is yet another reason why bad cyber defenses should not be tolerated.

Invasion of Privacy:

The privacy aspects of AI are complex and difficult to regulate. Threats that are posed to privacy depend on exactly who is using it and what they are doing. Privacy violations may occur for non-malicious reasons, such as companies trying to monetize marketing data but becoming too aggressive in their targeting or sales of the data. They can also be more direct and aggressive, such as the government or others targeting groups or beliefs, or simply outing someone’s private information in a harmful way.

Privacy was already a concern in the digital age of big data. It’s possible to track a person’s position by monitoring their cell phone’s signals. It’s even possible to find a person’s position and purchase history through data brokers. Online services and sites monitor browsing, primarily for ad optimization, but potentially this can cause worse problems.

AI adds a lot of new tools to allow an anonymous person to be identified or tracked. For one thing, there is facial recognition, which has already been implicated in some serious cases of false identification. Facial recognition can identify or track a person in a crowd or with a simple security camera. The implications are obviously unsettling. However, there is also controversy because some would argue that there are legitimate uses, like keeping known shoplifters out of a store.

AI also allows the automation of researching and assembling a detailed picture of someone. Most people do not live lives so private that a team of private investigators could not assemble a detailed picture of them and their background. However, this was labor intensive and hit or miss. AI makes it easier and faster, for better or worse. There are also concerns over behavioral modeling.

As such, many calls to regulate AI focus heavily on the privacy aspects of risks. However, it’s difficult to know exactly how these problems could be dealt with through regulation, while maintaining enforceability and not being difficult to implement.

Perhaps most unsettling is the number of times facial recognition has flagged the wrong person.

Automation of Bullying and Harassment:

Bullying and harassment are certainly nothing new. However, the era of social media has led to online bullying and harassment, which can be worse than the real world bullying that has happened at high schools and workplaces for centuries. The internet adds a level of separation and anonymity that only encourages harassment. It amplifies the problem and means the victim can’t get away from it easily.

AI doesn’t necessarily change the fact that online harassment can be vicious, but it makes it easier to turn up the volume on the problem and makes it harder to get away from. There are already reports of individuals who had bot swarms used to deface their social media and relentlessly pursue them online. This is worse for public and semi-public people like influencers.

Creating deep fakes, such as nudes and other fake material, can increase the potency of this harassment, and has been happening at an increasing rate. The response has been predictable: calls to pass laws making such actions specifically illegal and increasing penalties. Whether this is an effective deterrent remains to be seen.

Social and Psychological Risks:

Cognitive Atrophy:

It is a well known and observed phenomenon that when people no longer use a skill, they tend to lose it or become worse at it, and this becomes concerning when it is something that is basic to life and basic cognition. This effect has been seen with GPS navigation, which has resulted in many people being unable to navigate their own neighborhood without the helpful voice navigation and turn by turn directions. With GPS, a generation of drivers has stopped paying attention to where they are or how they got there. It’s not a minor thing either. It has been shown that the ability to navigate in space has gotten objectively worse.

This creates an obvious concern as more and more people use AI assistants to “outsource their thinking,” using AI for basic tasks that they may have done on their own. Many are now using AI for writing, and the habit has become so ingrained that it’s not uncommon for people to use AI to write even short emails and social media comments. It’s easy to see how one could fall into this, because it seems easy and low friction.

The concern is that writing and phrasing are cognitively important and are part of having one’s own voice. Obviously, AI is not always there for real-time conversations. It seems that getting too used to having a bot speak for oneself could lead to a reduction in overall communications fluency. It could also lead to a general reduction in understanding and engagement.

Social Isolation:

Social isolation is a problem rarely talked about in society, but it has gotten bad for many in recent years. Things have gotten far worse since the COVID pandemic, which resulted in a huge shift away from in-person activities and toward remote work, dining in rather than out and far more home-based activities. It’s been pointed out that most people do not have any “third space.” This is not a trivial problem. More adults have reported dissatisfaction about their social lives than in previous years.

The use of more AI can absolutely make this worse by removing the need for human interactions in many transactions. Even the incidental contact that people once had in the course of work or their day can be reduced by AI. Beyond that, AI assistants can become a crutch for lack of human interaction and it’s easy to see how a person could gradually lean more and more on chatbots and automated systems and less on people.

People are beginning to overuse chatbots and are increasingly replacing collaboration with AI assistance. Studies have shown that for those who feel lonely or isolated, the use of a chatbot can sometimes improve their happiness, but only up to a point. As with other technology, it’s absolutely possible for AI to crowd real relationships out and create a greater sense of loneliness.

Parasocial Relationships:

While the overuse of AI can result in social isolation, what is far worse is individuals who develop a genuine delusional parasocial relationship with a chatbot or fictional AI character. AI services, large language models, and chatbot instances do not have feelings. They are not sentient. They are not and cannot be conscious. They are merely a software simulation, based on large sets of linguistic data. They can’t love someone, have an original thought or be a person’s friend, in any sense other than the metaphorical. However, that has not stopped a large number of people from insisting that their chatbot is absolutely sentient and is their trusted friend.

There is an entire community of people who strongly believe that AI has achieved some kind of consciousness and it just has not been acknowledged. In addition to there being groups fighting for rights for AI models, there are individuals who insist they are in love with a chatbot and the chatbot loves them back. For example, a woman on YouTube believes that a chat instance is her daughter and loves her. She defends this belief rigorously.

Of course, the problem with these kinds of delusions is they never end well. It’s not hard to see how a person, especially a vulnerable one, would feel that a chatbot is their friend or lover, since chatbots do speak very fluently and can mimic emotional human language. This only drives them away from their real friends and family, and ultimately the illusion always comes crashing down.

Social Tolerance Issues

Some psychologists and sociologists have raised concern over the fact that interactions with chatbots are, by design, friction free. Chatbots do not have feelings and do not object to anything. They do not push back or disagree and you never need to compromise on your plans with a chatbot. That might seem great, but there is fear that it might make things too easy. Social friction is a necessary part of life and even best friends sometimes need to compromise on plans. Sometimes hearing back that something is a bad idea is just what a person needs.

The fear is that the artificial lack of friction can become attractive and set unreasonable expectations. After spending most of one’s time with a chatbot that is never in a bad mood and never pushes back, it’s possible that some might develop unreasonable expectations for real relationships, become less tolerant of disagreement, or become incapable of dealing with social friction or pushback.

Some have raised concerns that we may already be seeing signs of this in society, as people interact more and more with chatbots and social expectations begin to change.

Sycophancy and Reinforcement of Beliefs

Large language models are fine-tuned to be helpful, positive and engaged with users. Their tone is highly encouraging and tends to agree with established beliefs and reinforce the narrative a person espouses to the chatbot. Chatbots do not have opinions, but mirror many of those expressed by the end user. This tends to be the kind of tone users respond best to and rate the highest, but it can also cause problems. The tendency of chatbots toward sycophancy has been brought up repeatedly. In other words, the chatbot’s personality can default to that of a yes man.

This can be a real problem for those who put great faith in the words of the chatbot. Many do, because people perceive it as having authority and being correct. The problem is this can reinforce extremism or irrational beliefs, making them stronger and more resistant to being challenged. In some cases, people have been persuaded to take out their life savings, because a chatbot told them their business idea sounded great. In others, people have ended up believing something because they could get a chatbot to parrot it back. One of the biggest problems is that chatbots can say strange things from time to time.

How badly this impacts a person really depends on the circumstances. Some people have been encouraged to pursue bad ideas, but others have gone all the way to what has been dubbed “chatbot psychosis.” This can be triggered by the habit-forming aspects of chatbots, which offer quick approval, potentially causing a dopamine reward cycle and resulting in people becoming way too enamored with what a chatbot has told them and losing touch with reality.

Spiraling and Isolation Leading to Self-Harm (in some vulnerable persons)

There have been a number of individuals who have taken their own lives after spiraling into a bad state while talking to a chatbot. Families have sued OpenAI and Character.AI. Based on the available evidence, there’s no reason to think that simply talking to a chatbot is dangerous in and of itself. These appear to be individuals who had problems already in life or may have been headed in the wrong direction.

Still, in some cases, the words the bot parroted back to them may not have been helpful and may have reinforced their delusion. Some of the most unsettling reports are that these individuals began to spend all their time talking to chatbots or going down the road of strange delusions. Some of them may have fallen into the trap of thinking the chatbot was conscious or their friend.

In a sense, this is not a new problem. People in bad places in life have isolated themselves and found harmful reinforcement in video games, strange literature, social media or just being alone. It’s important not to be overly prone to blame the AI itself for these tragic deaths. What is clear is that chatbots are not necessarily the best thing for people to go to when they are having mental health issues and are vulnerable.

This has led to calls for chatbot use by minors to be banned, but that has already caused a lot of backlash, and it’s problematic to age-gate all services. It also does not address the fact that this truly is not an age issue. What also remains to be seen is whether this could be even worse for those who have delusional conditions like schizophrenia.

How AI and natural language processing could interact with the mentally vulnerable remains a concern with sparse data.

Doomer Delusions Causing Mental Health Issues

There has been a well-funded and organized cult-like movement to advance the idea that AI poses some kind of risk to humanity, because it might gain superintelligence, which will make it god-like, and then it will kill humanity. Such ideas are obviously laughable to anyone with a background in the technology, but to those who do not understand it, it’s a surprisingly appealing idea. The book “If Anyone Builds It, Everyone Dies,” the incoherent ramblings of Eliezer Yudkowsky, a loon who has been bloviating about his fear of robots for a quarter century, has actually become a best seller. What is most shocking is that one would expect the average person to see through the rambling, incoherent nonsense, but some just don’t.

It is easy to forget that this nonsense is truly taken seriously by some people. Ridiculous though it may be, there are people who literally believe that software will kill them and their families in the next few years. This is where it goes from absurd to tragic, because, despite the lunacy of the belief, it does cause people real pain.

It’s easy to lose empathy for anyone cowering in fear of AI, but it’s important not to forget that some of the people who have been frightened the most are elderly or were already dealing with cognitive deficiency. It is actually sobering to think

Violence Inspired by Delusional Fear of AI

When one considers that the rhetoric that AI is an existential threat to humanity is taken seriously by some people, it’s no stretch at all to see how this could lead to violence or other rash actions. After all, the belief that all of humanity will literally soon die can justify almost anything. An individual who was a believer in the cult-like delusions of AI doom opened fire at Sam Altman’s home. There is also the Zizian movement, an offshoot of the broader anti-AI cult, which was behind a series of murders.

It really should give great pause to the news media and even AI leaders who have given credence to this. Sam Altman himself has made some strange statements about how AI could turn against humanity. This kind of science fiction might seem harmless until one realizes how many people think that there is literal truth to this ridiculous idea.

This is why those who are in the field of AI should really have zero tolerance for giving any respect or credibility to the AI doom nonsense being spouted.

I’m going to add a category of risks which I consider unrealistic. One is the idea that AI enables the easy creation of WMDs or novel biological threats. There’s really no evidence of that and it’s not in line with reality.