AGI, ASI and the extreme confusion of it all

There has recently been a huge amount of confusion over the concept of Artificial General Intelligence (AGI): exactly what it means, whether it should be expected, and what it would mean for society. One frequently sees speculation about the “Race to AGI,” or questions like “How will we know when we have AGI?” or “What if they already have AGI and haven’t told anyone?”

This whole line of reasoning, the way it is framed, and the questions being asked indicate deep confusion about what AGI is, or at least what it is supposed to be. If that is not bad enough, we are now being told that AI is close to “superintelligence” or “ASI.” This is an entirely fictional idea, and nobody can even agree on what it is, other than that it might be scary.

The Basic Idea of AGI

The concept of artificial intelligence in the form of a fictionalized “thinking machine” goes back centuries. The modern concept of computer systems that simulate intelligent behavior dates to the 1950s. As systems dubbed AI were developed, it was clear that they were relatively narrow and bounded in what they could do. Machine learning and cognitive simulations could optimize systems and respond to variables, but they lacked the kind of “intelligence” that we think of in a human.

Intuitively, it was always clear that there existed a higher level of “general intelligence” of the type found in humans and other thinking beings. In the simplest sense, this meant an AI that could be communicated with like a person and that could understand human-like concepts, such as situations being subjectively better or worse. It made perfect sense that the mental model for general intelligence would be a synthetic human mind.

The terms Artificial General Intelligence and Narrow Artificial Intelligence came into common use around 2007, but the basic concept goes back much further. It had often been called “strong AI,” “human-like AI,” “full AI,” or “true AI.” In fact, this distinction became obvious early in the field of AI, when it was clear that systems that could mimic certain aspects of human intelligence were distinct from the popular notion of a fully digital mind, or anything like human-level capabilities across domains.

Sentience, Agency and Autonomous Goal Formation Are Natural Assumptions of Intelligence

What exactly is intelligence? Up until recently, the only real mental model anyone had for true intelligence was the mind of a human or an intelligent animal. There have always been large and complex systems, with advanced automation, but autopilots, thermostats and other unattended technologies are never really thought of as “intelligent” in the way that humans are, regardless of the complexity of their actions. These systems do not have preferences, so they don’t actually “choose” what to do. They are only doing what they are designed to. Therefore, we might call them “dumb.”

So when one talks about intelligence that “can do everything a person can” or “human-like intelligence,” it’s hard not to think of such an entity as having the same kind of preferences, self-direction and internal agency. By extension, it makes perfect sense that the only mental model one would have of such an entity would be a human mind. This naturally leads to questions like “could it have emotions?” or “might it feel the way a human does?” or “when does it become a mind?”

Science fiction had prepared society for this idea. Science fiction is, after all, the only place where expectations, hypotheticals and definitions could be explored. Robots and artificial intelligences with strong personalities, emotions, character flaws and desires are standard fixtures in science fiction. The idea that AI might turn evil is also a common trope. Characters like HAL 9000, Lt. Commander Data and R2-D2 become feared or beloved. Clearly, this is primarily about telling a compelling story. A fully mechanical system with no personality, even one that can replicate human capabilities, can’t be much of a character in a story.

So, based on the mental model for intelligence we have and the science fiction stories we have been primed with, it’s perfectly natural to anthropomorphize AI.

AGI in the popular mindset has always been equated with being a mind or a self-directed being.

How NLP broke the expectation

Being able to speak and hold a conversation has always been regarded as the hallmark of true intelligence. This goes back to the time of the Turing test, in which a computer attempts to mimic a human in conversational fluency. Natural language processing (NLP) has also long been an area of research in AI, with things like verbal interfaces receiving a great deal of attention over the years.

There is solid reasoning behind this approach. In general, a person’s ability to understand something can be judged by talking to them. If you can process language, you can accomplish most administrative tasks. Language is how humans interface with each other and how abstract information can be shared. Language is the most compressed form of cognition, and it can also be used to solve problems by “thinking out loud.”

It certainly seemed natural that if a computer could have broad, open-ended conversations with someone, especially about abstract and human-centric things, that must be intelligence in a very general and human way. This seemed entirely reasonable.

However, large language models did something that was completely unexpected. They managed to achieve a high level of verbal fluency and could process language in a way that seems very much like a human. They can talk about a huge number of subjects and maintain coherence. However, they didn’t do this by achieving true world understanding and experience-based intuition. Instead, they gained their contextual knowledge by being trained on an enormous body of human text, so they come pre-loaded with huge amounts of knowledge drawn from it.

LLMs may be able to simulate intelligent conversation. They may even be able to simulate cognitive reasoning, but the fact is, they do not have true cognitive capabilities. They do not understand the world and are unable to know if their output even makes sense. They’re sophisticated mathematical functions, but not smart in the way a human is.

There are many things a human can do which LLMs can’t. LLMs can’t engage in true spatial reasoning. They struggle with non-linear logic or anything with hidden variables. LLMs hallucinate because they don’t actually have any ground truth. LLMs can’t learn on the fly. They can’t even really do math properly, at least not without external tools.

So, no, LLMs are not truly “intelligent” in the human sense.

In 2001: A Space Odyssey, the HAL 9000 runs the ship, communicates with the crew in natural language and makes complex decisions. Although it would be architected differently, and would not malfunction as shown in the movie, today it would be entirely possible to build a system that does everything the HAL 9000 is shown doing in the film.

Are LLMs AGI?

Short answer: No, not the way AGI is currently defined.

However, this is a more interesting question than it might seem. The simplest answer is no, because they do not have the full spectrum of human cognitive abilities.

However, the question really depends on the definition of AGI. There has never been a formal, testable definition of what AGI is. Language models do not have a narrow use case. Because they can do so much, they could be called “general purpose” and that is enough to qualify them as AGI by some definitions.

Large language models do seem to meet the criteria for what would have been considered AGI at one time: they are general purpose, and they can simulate some amount of reasoning, even if it’s hit or miss. By many definitions, they would qualify. Of course, that doesn’t mean they are magic, only that they fit that definition.

But we seem to have decided that language alone, even if it’s very general purpose, does not constitute AGI.

Then what is AGI?

“Human-like” capabilities seem straightforward, but because AI is a completely different kind of thing, it’s not so simple. There is no formal definition of what that means or which capabilities are important and which are not. The concept has always rested on multiple interpretations. It’s not even clear whether all human-like capabilities need to be replicated at full human fidelity. There’s also the fact that capabilities vary from person to person.

So it’s not clear at all what AGI would be, in practice, other than that it would be “general.” The goalposts seem to keep moving on this. At one time it meant a system that could do most of what you’d need a human for, at a base level. Now, however, it is being stated that AGI is better than humans in every domain. It is therefore impossible to agree on whether AGI has been achieved until there is a definition.

Full Human Capability AGI:

If we are to define AGI as full human capabilities, then we are a long way off and can’t actually get to AGI with machine learning. It’s a much greater problem. It is important to understand that ChatGPT, Google Gemini and other deep learning models are pattern recreators. They have huge limits. LLMs are not capable of actual human cognition. In fact, no machine learning model is. They can only recreate patterns.

Humans, on the other hand, can imagine complex 3D worlds, reason over different domains, remember their own emotional state, question their own thought process and so on. People have a “mind’s eye” and an inner dialog. As a person walks down the street, they are receiving the world in high-resolution stereoscopic vision, feeling the air on their skin and perhaps wondering what they will do later.

Even if the goal is only to make a system that can do everything a human can, even if it is not internally using a human mind, we still run into some major roadblocks. In order for a system to have full human capabilities, it would obviously need a full episodic memory. As of now, there’s no way to do that with an ML model other than to have an external memory buffer. That works well enough for accomplishing tasks, but it is not the integrated memory a human has, so such a system still falls short of operating with the capabilities of a human.
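To make the “external memory buffer” idea concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the names EpisodicBuffer and build_prompt are hypothetical, and the crude word-overlap lookup stands in for whatever retrieval a real product uses. The point is only that the memory lives outside the model and has to be pasted back into the prompt each time.

```python
from collections import deque


class EpisodicBuffer:
    """Hypothetical external memory: plain text notes kept outside the model."""

    def __init__(self, capacity: int = 100):
        # A bounded queue: once full, the oldest episode is silently dropped.
        self.episodes = deque(maxlen=capacity)

    def remember(self, text: str) -> None:
        self.episodes.append(text)

    def recall(self, query: str, k: int = 2) -> list:
        # Score each stored episode by how many words it shares with the query.
        words = set(query.lower().split())
        scored = [(len(words & set(e.lower().split())), e) for e in self.episodes]
        ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
        return [episode for score, episode in ranked if score > 0][:k]


def build_prompt(buffer: EpisodicBuffer, question: str) -> str:
    # The model never "remembers"; recalled notes are re-inserted as text.
    notes = "\n".join(buffer.recall(question))
    return f"Relevant notes:\n{notes}\n\nQuestion: {question}"
```

Note that nothing here changes the model itself. Forgetting is just a note falling off the end of the queue, and remembering is just text being copied into the next prompt.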

Then there is the problem of learning. Machine learning is fundamentally different from human learning. Deep learning models can’t really learn and modify themselves the way a human can. Training requires dedicated training runs. This is the “lifelong learning problem.” It’s a major deficiency in ML capabilities. The problem is that neural networks can’t be updated without the risk of behavioral drift and catastrophic forgetting.
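Catastrophic forgetting can be shown with a toy far smaller than any real network. The sketch below is an illustration under stated assumptions, not a claim about how any production model is trained: a single weight is fit by gradient descent to one made-up task and then to a second, conflicting one, and its error on the first task is measured before and after.

```python
def train(w, data, lr=0.1, epochs=50):
    # Plain gradient descent on squared error for the model y = w * x.
    for _ in range(epochs):
        for x, y in data:
            w -= lr * 2 * (w * x - y) * x
    return w


def mse(w, data):
    # Mean squared error of the one-weight model on a dataset.
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


task_a = [(1.0, 2.0), (2.0, 4.0)]    # made-up task A: y = 2x
task_b = [(1.0, -1.0), (2.0, -2.0)]  # made-up task B: y = -x

w = train(0.0, task_a)
error_a_before = mse(w, task_a)      # near zero: task A has been learned

w = train(w, task_b)                 # keep training, but only on task B
error_a_after = mse(w, task_a)       # large: task A has been overwritten
```

Because both tasks share the same weight, learning the second one erases the first. Real networks have vastly more weights, but the same sharing is what makes updating them in place risky.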

Then there is the fact that no system based on current technology is actually capable of self-instantiating and none have goals or independent agency. You can’t just bolt this on either. It’s inherent to the workings of the models. It could be put into a prompting loop, like an agent, but does that really count as a full spectrum human being?

Then we have the problem of bandwidth and processing. Large language models use language, the most compressed form of human cognition. Yet LLMs still require some of the most powerful GPUs on earth, so it’s not likely that we are anywhere close to having GPUs that can simulate a human mind or anything near it.

In conclusion, it’s not just that current machines are not human-level capable. We’re also not on the path toward that. We have no known architecture that could achieve it. Finally, it’s not even clear that building a full human emulator would have economic value, since prompt-based tools are already highly capable.

The fact that we have token predictors for language may have gotten people to start thinking “AGI is right around the corner,” but the two are unrelated. We are no closer to AGI now than we have ever been, at least if it is truly human level in all domains.

Functional AGI

There are also definitions of AGI which hinge on the ability of the system to do tasks or be economically valuable. This is where it gets confusing, because one could also define AGI as a system that can do a broad variety of tasks with the basic competence of a human, at least within bounds and over a reasonably short period of time.

It seems entirely reasonable that AGI would not need to beat a human in all things. After all, other technology does not need to reach perfection to be recognized. If the definition leans into the “general” aspect of AGI, then AGI could be defined as a system which, if given an arbitrary task, can reliably accomplish it, planning and executing all the steps. Such a system would not need to be brilliant, but only have the capabilities of a competent administrative assistant.

For example, an AGI assistant could be asked to “Check my account and move some money to checking if it’s getting low. Schedule a vet appointment for my dog. Clean up the pile of photos from my vacation and select some good ones to post.” Such an open-ended list of tasks, in many domains, being accomplished reliably, is starting to feel like the AI we had all dreamed of. So, it’s reasonable to call such a system AGI, even if it does not meet (or come close to) full human capabilities.

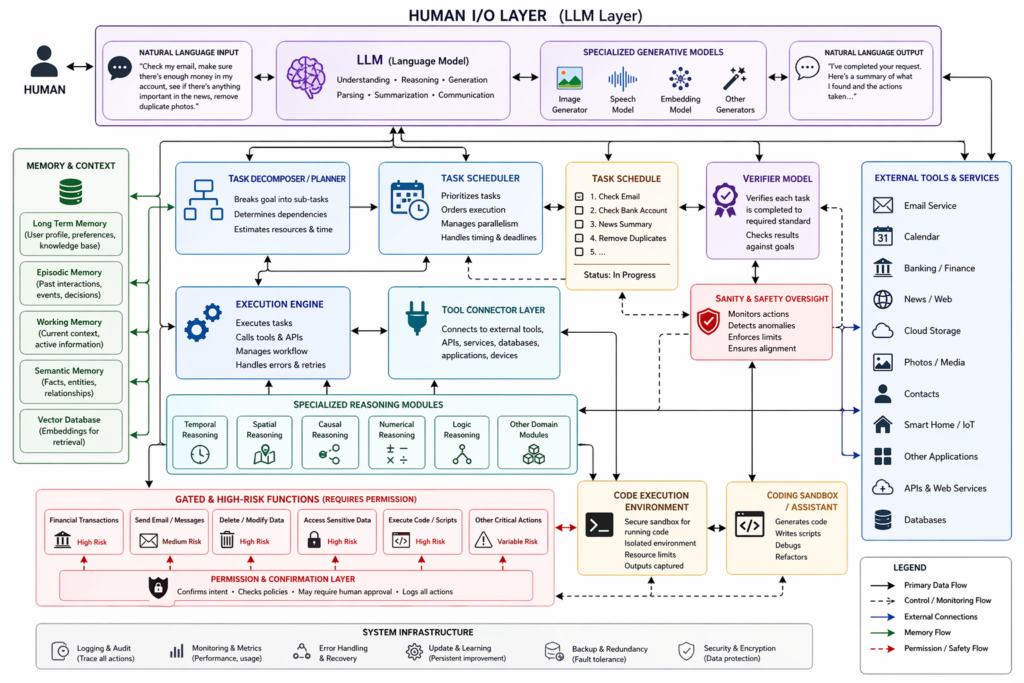

Such a system would not be arrived at entirely through machine learning. It would necessarily be a system of systems, with language processing, task scheduling, memory, supervision functions and connections to other systems. It would have to be engineered for reliability and security. That said, in principle there’s no reason that can’t be done.
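As a rough illustration of that “system of systems” shape, here is a minimal Python sketch. All names in it (Task, Assistant, the “vet” and “bank” connectors) are hypothetical, and the language-processing step is a stub that expects lines of the form “connector: request” where a real system would put a language model. It is a sketch of the wiring, not a working assistant.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    connector: str  # which external system should handle this
    payload: str    # what that system is being asked to do


@dataclass
class Assistant:
    connectors: dict                  # name -> function(payload) -> result text
    allowed: set                      # supervision: what may run unattended
    memory: list = field(default_factory=list)

    def parse(self, request: str) -> list:
        # Stand-in for language processing: one "connector: payload" per line.
        tasks = []
        for line in request.splitlines():
            name, _, payload = line.partition(":")
            if payload:
                tasks.append(Task(name.strip(), payload.strip()))
        return tasks

    def run(self, request: str) -> list:
        results = []
        for task in self.parse(request):  # scheduling: simple first-in order
            if task.connector not in self.connectors:
                outcome = f"no connector for {task.connector}"
            elif task.connector not in self.allowed:
                outcome = f"{task.connector} blocked pending human approval"
            else:
                outcome = self.connectors[task.connector](task.payload)
            self.memory.append(outcome)   # memory lives outside any model
            results.append(outcome)
        return results
```

For instance, an Assistant given a “vet” connector it is allowed to use and a “bank” connector it is not would book the appointment and hold the transfer for approval. The reliability and security come from the plumbing around the model, which is ordinary engineering.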

If “AGI” means that it can do most of what a human does, then it is likely we will reach this level in a few years or less. Already agentic frameworks are attempting to expand to more capabilities.

What functional AGI might look like (As illustrated by GPT)

Superintelligence:

Superintelligence, or ASI, is a term which has recently been making the rounds. It’s been talked about in the media, by AI leaders and others as if it is something that is coming or something to be concerned about. The term was popularized by Nick Bostrom, a philosopher rather than an AI practitioner. It is not a concept based in science, and what it is remains a moving target. It is often described as “god-like” or as something that would be a trump card over the laws of reality.

It’s not a valid concept, but rather a term imbued with magical thinking. No amount of development of AI turns it into a god or even gives it intentions. If AI gets better and more sophisticated, it will have longer context windows, it will be able to code more complex software, it will connect to more systems, and it will become more efficient. The dialog around the mythological idea of ASI is not useful.

Of course, computers are already much faster than humans. Computers are vastly better at math and information lookup. The problem with this entire line of thinking is that it is based on the recurrent mistake of treating feed-forward functions as living, self-deciding minds.

This is what happens when philosophers and futurists are leading the discussion on a matter that should remain technically grounded in reality.

Intelligence does appear to have limits. Idiocy can be carried to infinity.